05.18.16

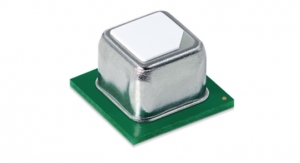

Mobileye and STMicroelectronics announced that the two companies are co-developing the fifth generation of Mobileye’s system-on-chip (SoC), the EyeQ®5, which will act as the central computer performing sensor fusion for Fully Autonomous Driving (FAD) vehicles starting in 2020.

To meet power consumption and performance targets, the EyeQ5 will be designed in an advanced FinFET technology node of 10nm or below and will feature eight multithreaded CPU cores coupled with 18 cores of Mobileye’s next-generation, innovative and well-proven vision processors.

Taken together, these enhancements will increase performance 8x over the current fourth-generation EyeQ4. The EyeQ5 will deliver more than 12 tera-operations per second (TOPS) while keeping power consumption below 5W, low enough to allow passive cooling despite the high performance.
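As a rough check of what the two announced targets imply together, dividing the throughput floor by the power ceiling gives a lower bound on energy efficiency. This ratio is derived here from the stated figures and is not a number quoted by Mobileye:

$$
\text{efficiency} \;>\; \frac{12\ \text{TOPS}}{5\ \text{W}} \;=\; 2.4\ \text{TOPS/W}
$$

Because throughput is stated as a minimum and power as a maximum, the true ratio can only be higher than this bound if both targets are met.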

The EyeQ5 continues Mobileye’s long-standing cooperation with STMicroelectronics. Leveraging its experience in automotive-grade designs, ST will support state-of-the-art physical implementation, specific memory and high-speed interfaces.

Mobileye N.V.’s proprietary software algorithms and EyeQ chips perform detailed interpretations of the visual field in order to anticipate possible collisions with other vehicles, pedestrians, cyclists, animals, debris and other obstacles. Mobileye’s products are also able to detect roadway markings such as lanes, road boundaries, barriers and similar items; identify and read traffic signs, directional signs and traffic lights; create a Roadbook of localized drivable paths and visual landmarks using REM (Road Experience Management); and provide mapping for autonomous driving. Its products are or will be integrated into car models from 25 global automakers.

“EyeQ5 is designed to serve as the central processor for future fully-autonomous driving for both the sheer computing density, which can handle around 20 high-resolution sensors and for increased functional safety,” said Prof. Amnon Shashua, co-founder, CTO and chairman of Mobileye. “The EyeQ5 continues the legacy Mobileye began in 2004 with EyeQ1, in which we leveraged our deep understanding of computer vision processing to develop highly optimized architectures to support extremely intensive computations at power levels below 5W to allow passive cooling in an automotive environment.”

Autonomous driving requires fusion processing of dozens of sensors, including high-resolution cameras, radars, and LiDARs. The sensor-fusion process has to simultaneously grab and process all the sensors’ data.
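To illustrate what “simultaneously grab” involves, the following Python sketch assembles one fused frame by picking, for every sensor stream, the sample closest to a reference timestamp, and rejects the frame if any sensor has no sample within a time tolerance. The `Sample` type, the function names, the sensor names, the timestamps and the 10 ms tolerance are all hypothetical, chosen for illustration; this is not Mobileye’s implementation.

```python
from bisect import bisect_left
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Sample:
    t_ms: float  # capture timestamp in milliseconds (hypothetical units)
    data: str    # placeholder payload, e.g. an image or a point cloud


def nearest(samples: List[Sample], t_ms: float) -> Optional[Sample]:
    """Return the sample closest in time to t_ms; samples must be sorted by t_ms."""
    if not samples:
        return None
    times = [s.t_ms for s in samples]
    i = bisect_left(times, t_ms)
    # Only the neighbours on either side of the insertion point can be closest.
    candidates = samples[max(i - 1, 0):i + 1]
    return min(candidates, key=lambda s: abs(s.t_ms - t_ms))


def grab_synchronized(streams: Dict[str, List[Sample]], t_ms: float,
                      tolerance_ms: float) -> Optional[Dict[str, Sample]]:
    """Build one fused frame with one sample per sensor, all within tolerance.

    Returns None if any sensor lacks a sample close enough to t_ms,
    so downstream fusion never mixes data from mismatched instants.
    """
    frame: Dict[str, Sample] = {}
    for name, samples in streams.items():
        s = nearest(samples, t_ms)
        if s is None or abs(s.t_ms - t_ms) > tolerance_ms:
            return None
        frame[name] = s
    return frame


# Hypothetical example streams: a ~30 fps camera, a radar and a 10 Hz LiDAR.
streams = {
    "front_camera": [Sample(0.0, "f0"), Sample(33.3, "f1"), Sample(66.7, "f2")],
    "radar": [Sample(5.0, "r0"), Sample(55.0, "r1")],
    "lidar": [Sample(0.0, "l0"), Sample(100.0, "l1")],
}

frame = grab_synchronized(streams, 0.0, 10.0)
print({k: s.data for k, s in frame.items()})  # {'front_camera': 'f0', 'radar': 'r0', 'lidar': 'l0'}
print(grab_synchronized(streams, 60.0, 10.0))  # None: no LiDAR sample near 60 ms
```

Rejecting an incomplete frame, rather than fusing whatever arrived, is one simple design choice; a production system might instead interpolate or motion-compensate late samples.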

Engineering samples of EyeQ5 are expected to be available by first half of 2018. First development hardware with the full suite of applications and SDK are expected by the second half of 2018.
